Methodology

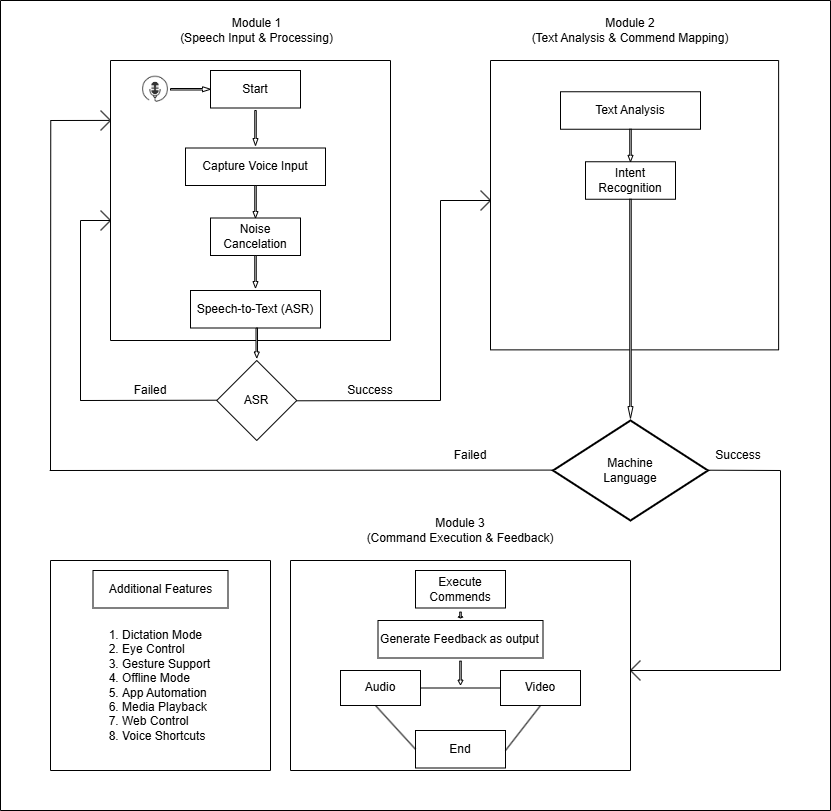

Our project provides a voice-controlled system, "SONIX", designed for individuals with physical disabilities who cannot use their hands because of congenital conditions or accidents. The methodology is divided into three key phases: Input, Processing, and Output.

Input Phase

- Voice Capture: External microphone for high-quality audio signals

- Noise Handling: Real-time noise cancellation and filtering

- Multi-language Support: Accommodates diverse accents and languages
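
The noise-handling step can be illustrated with a very small sketch. The source does not say which filtering technique SONIX uses, so the code below is an assumption: a simple energy-based noise gate that silences frames whose level falls below a threshold. It is not full noise cancellation, and the names `rms`, `noise_gate`, `frame_size`, and `threshold` are hypothetical.

```python
import math


def rms(frame):
    """Root-mean-square level of one frame of integer samples."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def noise_gate(samples, frame_size=160, threshold=500.0):
    """Replace low-energy frames with silence (assumed technique).

    samples: list of PCM sample values
    frame_size: samples per frame (hypothetical default)
    threshold: RMS level below which a frame is treated as noise
    """
    gated = []
    for start in range(0, len(samples), frame_size):
        frame = samples[start:start + frame_size]
        if rms(frame) >= threshold:
            gated.extend(frame)              # keep speech-level audio
        else:
            gated.extend([0] * len(frame))   # mute background noise
    return gated
```

For example, `noise_gate([10, -10, 10, -10, 1000, -1000, 1000, -1000], frame_size=4)` mutes the first frame and keeps the second. In practice the threshold would need to be tuned to the microphone and the room.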

Processing Phase

- Speech Recognition: Automatic speech recognition (ASR) converts speech to text

- Intent Analysis: Natural language processing (NLP) interprets user commands

- Action Mapping: Commands mapped to predefined actions

- Offline Capability: Functions without internet dependency
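
The intent-analysis and action-mapping steps can be sketched as a lookup from recognized text to a predefined action. This is a minimal illustration under assumptions, not the project's actual NLP component: the `ACTIONS` table, its phrases, and the action identifiers are all hypothetical, and the matching is plain phrase lookup on English text.

```python
import re

# Hypothetical command table: trigger phrase -> action identifier.
ACTIONS = {
    "mute microphone": "meeting.mute",
    "unmute microphone": "meeting.unmute",
    "open document": "office.open_document",
    "next slide": "office.next_slide",
}


def normalize(text):
    """Lowercase the transcript, drop punctuation, collapse whitespace."""
    text = re.sub(r"[^a-z0-9\s]", " ", text.lower())
    return " ".join(text.split())


def map_command(transcript):
    """Return the action id for the longest matching phrase, or None."""
    padded = f" {normalize(transcript)} "
    # Pad with spaces so phrases match on whole words only
    # ("unmute microphone" must not trigger "mute microphone").
    matches = [phrase for phrase in ACTIONS if f" {phrase} " in padded]
    if not matches:
        return None
    return ACTIONS[max(matches, key=len)]
```

Because the table is local data, a lookup like this works without an internet connection, which fits the offline requirement. Unrecognized commands return `None` so the caller can ask the user to repeat.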

Output Phase

- Dual Feedback: Audio (TTS) and visual confirmation

- Application Control: Hands-free operation of software

- Cross-application: Works with Google Meet, Zoom, Microsoft Office, and similar applications
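
The dual-feedback idea can be sketched as one function that sends the same confirmation to both channels. The function name `give_feedback` and the `speak` and `show` callbacks are hypothetical; in a real build `speak` would wrap a TTS engine and `show` would update the interface, neither of which the source specifies.

```python
def give_feedback(action, succeeded, speak, show):
    """Confirm the result of a command visually and audibly.

    action: human-readable name of the command that ran
    succeeded: True if the command completed
    speak: callable taking a string (assumed TTS wrapper)
    show: callable taking a string (assumed on-screen display)
    """
    status = "done" if succeeded else "failed"
    message = f"{action}: {status}"
    show(message)  # visual confirmation first, so it is never lost
    try:
        speak(message)
    except Exception:
        # If audio output fails, the visual message has already been shown.
        pass
    return message
```

Passing the two channels in as callables keeps the sketch independent of any particular TTS library; for a quick check, `give_feedback("Mute microphone", True, print, print)` prints the confirmation twice.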

Technical Stack

- Frontend: HTML, CSS, JavaScript, ReactJS

- Backend: Python with MongoDB/MySQL

- Libraries: SpeechRecognition, PyDub, DeepSpeech

- APIs: Google Meet, Zoom, Microsoft Office